Oct 08, 2016. HORTONWORKS: SANDBOX SETUP (HANDS ON STEP BY STEP) By www.HadoopExam.com Note: These instructions should be used with the HadoopExam Apache Spark: Professional Trainings, where they are executed live and you can practice hands-on with a trainer. HORTONWORKS HDPCD (Hadoop Developer Certification) is available with a total of 74 solved problem scenarios.

Introduction

This tutorial walks through the general approach for installing the Hortonworks Sandbox (HDP or HDF) onto Docker on your computer.

Prerequisites

Hortonworks Sandbox installation instructions (VirtualBox on Windows): to run the Sandbox you must install one of the supported virtual machine environments on your host machine: Oracle VirtualBox, VMware Fusion (Mac), or VMware Player (Windows/Linux). In general, the default settings for these environments are fine.

Memory Configuration

Memory For Linux

No special configuration needed for Linux.

Memory For Windows

After installing Docker For Windows, open the application and click on the Docker icon in the menu bar. Select Settings.

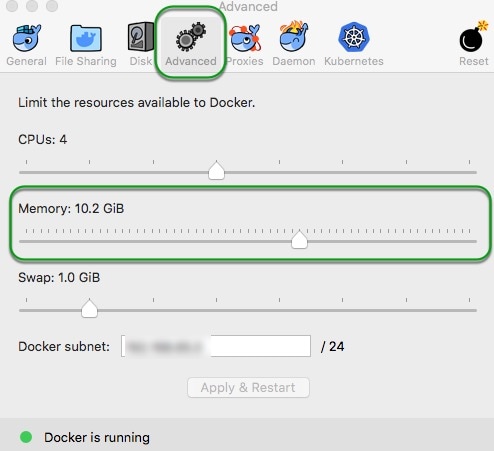

Select the Advanced tab and adjust the dedicated memory to at least 10GB of RAM.

Memory For Mac

After installing Docker For Mac, open the application and click on the Docker icon in the menu bar. Select Preferences.

Select the Advanced tab and adjust the dedicated memory to at least 12GB of RAM.

HDP Deployment

Deploy HDP Sandbox

Install/Deploy/Start HDP Sandbox

In the decompressed folder, you will find the shell script docker-deploy-.sh. From the command line (Linux / Mac / Windows Git Bash), run the script:

Note: You only need to run the script once. It will set up and start the sandbox for you, creating the sandbox Docker container in the process if necessary.

Note: The decompressed folder has other scripts and folders. We will ignore those for now. They will be used later in advanced tutorials.

The script output will be similar to:

Verify HDP Sandbox

Verify HDP sandbox was deployed successfully by issuing the command:
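A minimal sketch of such a check, assuming Docker's standard `docker ps` listing is what is intended here. The `list_sandbox_containers` helper and the `sandbox` name filter are illustrative assumptions, not names taken from the deployment script.

```shell
# Hypothetical helper: list running containers whose name contains "sandbox".
# The name filter is an assumption about how the deployment script names its
# containers; a plain `docker ps` lists every running container instead.
list_sandbox_containers() {
  docker ps --filter "name=${1:-sandbox}"
}
```

Calling `list_sandbox_containers` prints one row per matching running container; a table with only the header row means nothing matching is up.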

You should see something like:

Stop HDP Sandbox

When you want to stop/shutdown your HDP sandbox, run the following commands:
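As a sketch, stopping comes down to `docker stop` on each sandbox container. The container names `sandbox-hdp` and `sandbox-proxy` used as defaults below are assumptions; confirm the real names with `docker ps` before relying on them.

```shell
# Hypothetical helper: stop the sandbox containers.
# The default names are assumptions; pass your own container names to override.
stop_sandbox() {
  if [ "$#" -eq 0 ]; then
    set -- sandbox-hdp sandbox-proxy
  fi
  for name in "$@"; do
    docker stop "$name"
  done
}
```

Run `stop_sandbox` with no arguments to use the assumed names, or `stop_sandbox NAME...` with the names your deployment actually created.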

Restart HDP Sandbox

When you want to re-start your sandbox, run the following commands:
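A sketch of the restart, assuming the containers already exist from an earlier deployment and only need `docker start`. The default container names are assumptions, as is the choice to abort on the first failure.

```shell
# Hypothetical helper: start existing (stopped) sandbox containers.
# The default names are assumptions; check `docker ps -a` for the real ones.
start_sandbox() {
  if [ "$#" -eq 0 ]; then
    set -- sandbox-hdp sandbox-proxy
  fi
  for name in "$@"; do
    # Stop at the first container that fails to start.
    docker start "$name" || return 1
  done
}
```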

Remove HDP Sandbox

A container is an instance of the Sandbox image. You must stop the container and its dependencies before removing it. Issue the following commands:
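The stop-then-remove order can be sketched as follows. The container names are assumptions, and the helper is hypothetical; `docker stop` and `docker rm` are the standard Docker commands for these two steps.

```shell
# Hypothetical helper: stop every sandbox container first, then remove them.
# The default names are assumptions; check `docker ps -a` for the real ones.
remove_sandbox_containers() {
  if [ "$#" -eq 0 ]; then
    set -- sandbox-hdp sandbox-proxy
  fi
  # Stop everything before removing anything, so no container is removed
  # while another one that depends on it is still running.
  for name in "$@"; do
    docker stop "$name"
  done
  for name in "$@"; do
    docker rm "$name"
  done
}
```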

If you want to remove the HDP Sandbox image, issue the following command after stopping and removing the containers:
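A sketch of the image removal using the standard `docker rmi` command. The helper name is hypothetical, and it deliberately has no default image: the repository and tag depend on the release you deployed, so look them up with `docker images` first.

```shell
# Hypothetical helper: remove one image by reference (repository:tag or ID).
# No default is provided because the image name depends on your release.
remove_sandbox_image() {
  if [ -z "${1:-}" ]; then
    echo "usage: remove_sandbox_image IMAGE" >&2
    return 1
  fi
  docker rmi "$1"
}
```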

HDF Deployment

Deploy CDF Sandbox

Install/Deploy/Start CDF Sandbox

In the decompressed folder, you will find the shell script docker-deploy-.sh. From the command line (Linux / Mac / Windows Git Bash), run the script:

Note: You only need to run the script once. It will set up and start the sandbox for you, creating the sandbox Docker container in the process if necessary.

Note: The decompressed folder has other scripts and folders. We will ignore those for now. They will be used later in advanced tutorials.

The script output will be similar to:

Verify CDF Sandbox

Verify HDF sandbox was deployed successfully by issuing the command:

You should see something like:

Stop CDF Sandbox

When you want to stop/shutdown your HDF sandbox, run the following commands:

Restart CDF Sandbox

When you want to re-start your HDF sandbox, run the following commands:

Remove CDF Sandbox

A container is an instance of the Sandbox image. You must stop the container and its dependencies before removing it. Issue the following commands:

If you want to remove the CDF Sandbox image, issue the following command after stopping and removing the containers:

Enable Connected Data Architecture (CDA) - Advanced Topic

Prerequisite:

Hortonworks Connected Data Architecture (CDA) allows you to play with both data-in-motion (CDF) and data-at-rest (HDP) sandboxes simultaneously.

HDF (Data-In-Motion)

Data-In-Motion is the idea that data is ingested from all sorts of different devices into a flow or stream. While the data moves through this flow, components, or "processors" as NiFi calls them, act on the data to modify, transform, aggregate and route it. Data-In-Motion covers much of the preprocessing stage in building a Big Data Application. For instance, data preprocessing is where Data Engineers work with the raw data to format it into a better schema, while Data Scientists focus on analyzing and visualizing the data.

HDP (Data-At-Rest)

Data-At-Rest is the idea that data is not moving and is stored in a database or a robust datastore on distributed storage such as the Hadoop Distributed File System (HDFS). Instead of sending the data to the queries, the queries are sent to the data to find meaningful insights. This is the stage of building a Big Data Application where data processing and analysis occur.

Update Docker Memory

Select Docker -> Preferences -> Advanced and set memory accordingly. Restart Docker.

Run Script to Enable CDA

When you first deployed the sandbox, a suite of deployment scripts was downloaded (refer to Deploy HDP Sandbox as an example).

In the decompressed folder, you will find the shell script enable-native-cda.sh. From the command line (Linux / Mac / Windows Git Bash), run the script:

The script output will be similar to:

Further Reading

Appendix A: Troubleshooting

Drive not shared

No space left on device

Port Conflict

While running the deployment script, you may run into conflicting port issue(s) similar to:

In the picture above, we had a port conflict with 6001.
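Before re-running the deployment, it can help to confirm which ports are already taken on the host. The following is a rough sketch with two hypothetical helpers; it relies on bash's `/dev/tcp` redirection, so it assumes bash rather than plain sh, and it only detects listeners that accept connections on the given host (127.0.0.1 by default).

```shell
# Hypothetical helper: succeed if something accepts TCP connections on the port.
# Usage: port_in_use PORT [HOST]. Relies on bash's /dev/tcp redirection.
port_in_use() {
  (exec 3<>"/dev/tcp/${2:-127.0.0.1}/$1") 2>/dev/null
}

# Report the state of one port, for example the 6001 from the conflict above.
check_port() {
  if port_in_use "$1"; then
    echo "port $1 is in use"
  else
    echo "port $1 looks free"
  fi
}
```

For example, `check_port 6001` reports whether anything on the local machine is already listening on the conflicting port.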

Go to the location where you saved the Docker deployment scripts (refer to Deploy HDP Sandbox as an example). You will notice that a new directory, sandbox, was created.

Verify sandbox was deployed successfully by issuing the command:

You should see something like:

In the next 4 articles, we’ll launch Hadoop MapReduce jobs (WordCount on a large file) using the HortonWorks Sandbox.

Getting started with the VM

Install and launch

The Sandbox by Hortonworks is a straightforward, pre-configured, learning environment that contains the latest developments from Apache Hadoop, specifically the Hortonworks Data Platform (HDP). The Sandbox comes packaged in a virtual environment that can run in the cloud or on your machine. Sandbox also offers a data-in-motion framework for IoT solutions called Hortonworks Data Flow (HDF). To configure Hadoop from scratch on a Linux VM, this tutorial might be useful: https://www.tutorialspoint.com/hadoop/hadoop_enviornment_setup.htm

Hortonworks lets you use the Hadoop tools by connecting to the virtual machine over SSH for command-line access. Numerous web interfaces are also available.

The sandbox can be downloaded from here.

Download the HDP Sandbox. This Sandbox makes it easy to get started with Apache Hadoop, Apache Spark, Apache Hive, Apache HBase, Druid and Data Analytics Studio (DAS). Choose the VirtualBox installation type.

Fill in the form and download version 2.6.5 of the Sandbox. The sandbox requires around 15 GB of disk space.

Once this is done (the download might take a long time):

The first boot takes a while, so time for a break!

Access the Sandbox

The application is now ready to be used:

User

Once started, the Sandbox is accessible from your local computer over SSH on port 2222. We will use the username raj_ops and the password raj_ops, as this account is pre-registered.

There are many pre-configured users for the Hortonworks Sandbox, including:

SSH

Launch your terminal and access the Sandbox using SSH:

ssh raj_ops@localhost -p 2222

If you are asked whether you'd like to permanently add the host (on port 2222) to the list of known hosts, answer yes.

Splash Page

We should now be able to access a graphical view of the services available on the Hadoop cluster. It's called the VirtualBox Splash Page, and it can be accessed at:

http://localhost:8080
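Because the first boot takes a while, the page may not answer right away. A small polling loop can tell you when it does. This is a sketch only: the `wait_for_url` helper and its retry defaults (30 attempts, 10 seconds apart) are arbitrary assumptions, and it treats any HTTP answer, including a login redirect, as "up".

```shell
# Hypothetical helper: poll a URL until the server answers or attempts run out.
# Usage: wait_for_url URL [ATTEMPTS] [DELAY_SECONDS]
wait_for_url() {
  url="$1"
  tries="${2:-30}"
  delay="${3:-10}"
  while [ "$tries" -gt 0 ]; do
    # curl exits 0 once it gets any HTTP response, whatever the status code.
    if curl -s -o /dev/null "$url"; then
      echo "up: $url"
      return 0
    fi
    tries=$((tries - 1))
    sleep "$delay"
  done
  echo "gave up waiting for $url" >&2
  return 1
}
```

For example, `wait_for_url http://localhost:8080` returns once the page is reachable, or fails after the last attempt.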

You will be asked to log in:

Then, after loading, the page should look like this:

You are now connected to the Sandbox and you can access the HDFS file system. We’ll dive deeper into this in the next articles.

Conclusion: I hope this tutorial was clear and helpful. I’d be happy to answer any question you might have in the comments section.
